This article walks through how an individual Dooder learns in a simulation. For an overview of the project, see the GitHub repository.

The Experiment

In this experiment, I ran a single Dooder through a 100-cycle simulation in a 5x5 grid environment. Energy was randomly added to and removed from the environment, with no more than 10 energy objects present during any given cycle. In a future experiment, I plan to compare the results of multiple simulations that use the same parameters.
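To make the setup concrete, here is a minimal sketch of an energy landscape that follows the constraints above (5x5 grid, 100 cycles, at most 10 energy objects). The function name `step_energy` and the `remove_prob` and `spawn_attempts` parameters are assumptions made for illustration; the project's actual rules for adding and removing energy may differ.

```python
import numpy as np

GRID_SIZE = 5     # 5x5 grid, from the article
MAX_ENERGY = 10   # cap on energy objects per cycle, from the article
CYCLES = 100      # length of the simulation, from the article

def step_energy(grid, rng, remove_prob=0.2, spawn_attempts=3):
    """Randomly remove and add energy without exceeding MAX_ENERGY objects."""
    grid = grid.copy()
    # Assumption: each existing energy object disappears with probability remove_prob.
    decayed = rng.random(grid.shape) < remove_prob
    grid[decayed] = 0
    # Assumption: try to place a few new objects on empty cells while below the cap.
    for _ in range(spawn_attempts):
        if grid.sum() >= MAX_ENERGY:
            break
        empty_cells = np.argwhere(grid == 0)
        if len(empty_cells) == 0:
            break
        row, col = empty_cells[rng.integers(len(empty_cells))]
        grid[row, col] = 1
    return grid

rng = np.random.default_rng(seed=0)
grid = np.zeros((GRID_SIZE, GRID_SIZE), dtype=int)
energy_counts = []
for cycle in range(CYCLES):
    grid = step_energy(grid, rng)
    energy_counts.append(int(grid.sum()))
```

Tracking `energy_counts` makes it easy to confirm that the cap holds in every cycle.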

That analysis will show how many Dooders complete all 100 cycles and how closely their behaviors align. The present experiment focuses on the Dooder's movement model, which is the foundation of its energy-seeking behavior.

Perception State

The primary objective of a Dooder is to search for energy, as it is essential for its continued existence. If a Dooder remains without energy for an extended period, its chances of termination increase with each passing cycle. However, by effectively learning to locate and acquire energy from the environment, it can significantly prolong its lifespan.

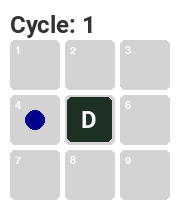

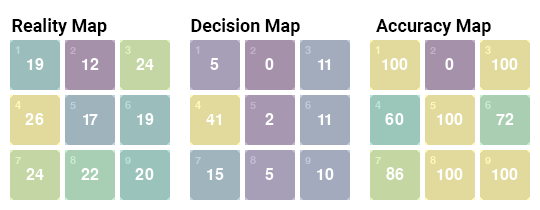

Energy is generated within the environment during each cycle, creating a random and dynamic energy landscape. Figure-1 shows the perception state of the Dooder during the initial cycle. The perception state represents the Dooder's perspective: its own space plus the neighboring spaces.
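As a rough illustration, the perception state can be thought of as the 3x3 window around the Dooder, flattened into nine values. The sketch below assumes a hypothetical helper named `perceive` and assumes that spaces beyond the edge of the grid are read as empty; the project may treat edges differently.

```python
import numpy as np

def perceive(energy_grid, row, col):
    """Return the 3x3 neighborhood around (row, col) as a flat array of 9 values."""
    # Assumption: pad with zeros so edge positions still get a full 3x3 window.
    padded = np.pad(energy_grid, 1, mode="constant", constant_values=0)
    window = padded[row:row + 3, col:col + 3]
    return window.flatten()

energy_grid = np.zeros((5, 5), dtype=int)
energy_grid[2, 1] = 1   # energy directly to the left of the Dooder
perception = perceive(energy_grid, row=2, col=2)
print(perception)       # [0 0 0 1 0 0 0 0 0]
```

Counting from 1, the fourth value is the space to the left of the Dooder and the fifth value is the Dooder's own space.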

A Simple Neural Network

In this particular scenario, there is energy located in space #4 to the left of the agent. This information is the only data available to the Dooder during its first cycle [1]. Through a process of "trial and error", the Dooder gradually learns to accurately identify spaces containing energy and subsequently moves towards those spaces (provided it can survive long enough to learn to do so). This learning process is facilitated by an algorithm known as back-propagation, which enables the Dooder to update its neural network. The neural network serves as the repository for the Dooder's knowledge and experience [2].
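For readers new to back-propagation, the following is a minimal sketch of a single training step in NumPy. It is deliberately smaller than the Dooder's network: it uses one hidden layer of a hypothetical size, ReLU and softmax activations, a cross-entropy loss, and plain gradient descent, all of which are assumptions. How the real Dooder derives its training target is not described here, so the sketch simply treats the index of a space that holds energy as the correct answer.

```python
import numpy as np

rng = np.random.default_rng(0)
HIDDEN = 16  # hypothetical hidden-layer size, not the Dooder's real configuration

# Randomly initialized parameters, mirroring the random starting values of the neurons.
params = {
    "W1": rng.normal(0.0, 0.1, size=(9, HIDDEN)),
    "b1": np.zeros(HIDDEN),
    "W2": rng.normal(0.0, 0.1, size=(HIDDEN, 9)),
    "b2": np.zeros(9),
}

def train_step(params, perception, target, learning_rate=0.1):
    """Run one forward pass, back-propagate the error, and update params in place."""
    # Forward pass: hidden layer with ReLU, output layer with softmax.
    hidden_pre = perception @ params["W1"] + params["b1"]
    hidden = np.maximum(0.0, hidden_pre)
    logits = hidden @ params["W2"] + params["b2"]
    exp = np.exp(logits - logits.max())
    probs = exp / exp.sum()
    loss = -np.log(probs[target])  # cross-entropy for the correct space

    # Backward pass: apply the chain rule from the output back to the first layer.
    d_logits = probs.copy()
    d_logits[target] -= 1.0
    d_W2 = np.outer(hidden, d_logits)
    d_hidden = params["W2"] @ d_logits
    d_hidden_pre = d_hidden * (hidden_pre > 0)
    d_W1 = np.outer(perception, d_hidden_pre)

    # Gradient-descent update.
    params["W2"] -= learning_rate * d_W2
    params["b2"] -= learning_rate * d_logits
    params["W1"] -= learning_rate * d_W1
    params["b1"] -= learning_rate * d_hidden_pre
    return float(loss)

perception = np.array([0, 0, 0, 1, 0, 0, 0, 0, 0], dtype=float)
target = 3  # index of the space that holds energy (space #4)
losses = [train_step(params, perception, target) for _ in range(50)]
```

Repeating the step on the same perception drives the loss down, which is the "trial and error" loop in miniature: predict, measure the error, and nudge the weights to reduce it.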

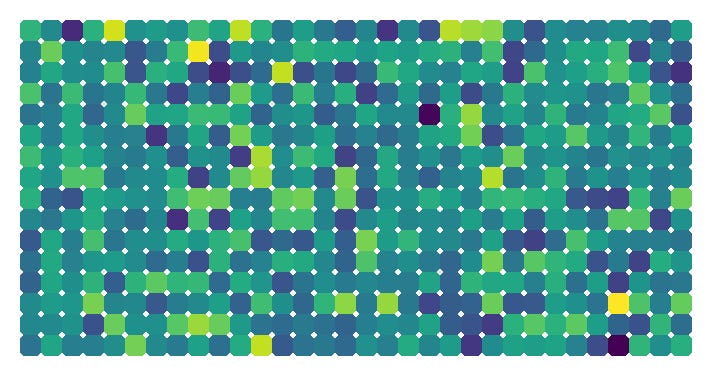

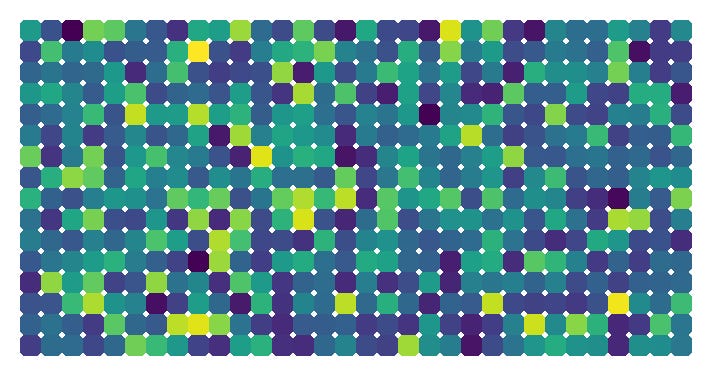

To accomplish its energy identification task, the Dooder's neural network consists of 1,042 neurons [3]. By comparison, the human brain contains approximately 86 billion neurons. Figure-2 shows a representation of a single neuron within the Dooder's neural network at the start of the experiment. The initial values of the neurons are assigned randomly and are updated as the Dooder learns and moves within the environment.

Signs of Learning

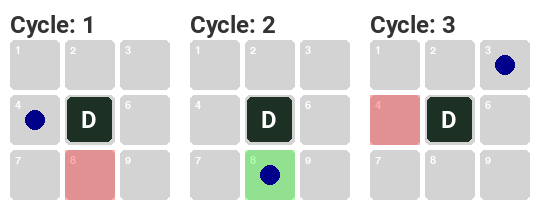

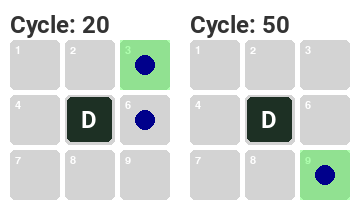

Figure-3 shows the Dooder's first three cycles, displaying where energy was present and which moves the agent chose. Initially, the Dooder's choices are essentially random because it lacks the information needed to locate energy. Figure-4 shows a noticeable improvement in the Dooder's ability to identify energy as the cycles progress and it gains experience.

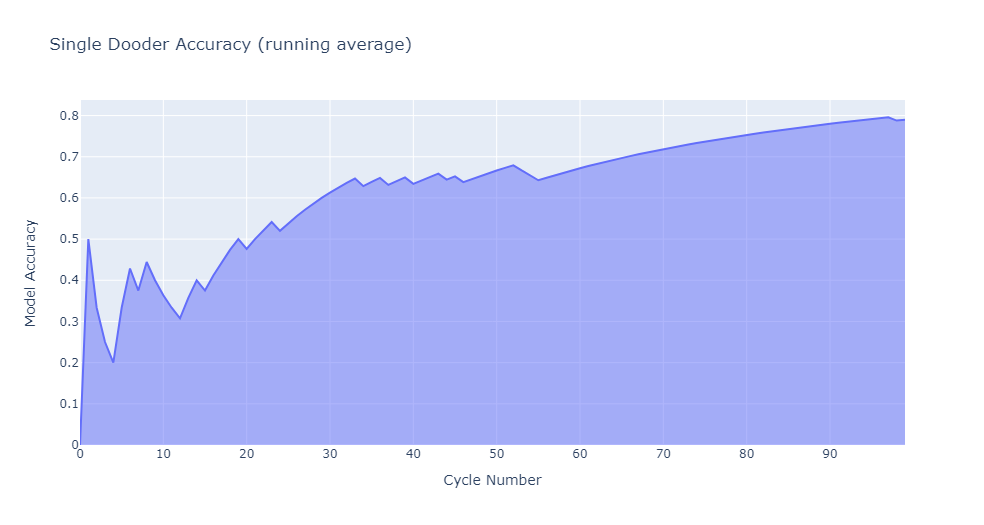

This particular Dooder completed the simulation, reaching 100 cycles with an average accuracy of 78%. In other words, it correctly identified the presence of energy in a neighboring space approximately 78% of the time. Figure-5 shows the accuracy improving steadily as the Dooder gained experience, demonstrating its ability to learn from the environment.
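One way to compute an accuracy figure like this is to count a decision as correct when the chosen space actually contained energy, then average over cycles. The sketch below uses that definition as an assumption, and the four cycles of data are made up purely for illustration; they are not results from the experiment.

```python
import numpy as np

# Made-up perception arrays for four cycles (1 = energy present in that space).
perceptions = np.array([
    [0, 0, 0, 1, 0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 1, 0, 0, 0],
    [1, 0, 0, 0, 0, 0, 0, 0, 1],
])
# Made-up movement choices, given as an index into each perception array.
choices = np.array([7, 1, 5, 8])

# A choice is a hit when the chosen space contained energy in that cycle.
hits = perceptions[np.arange(len(choices)), choices] == 1

# Running accuracy after each cycle, the kind of curve an accuracy-over-time plot shows.
running_accuracy = np.cumsum(hits) / np.arange(1, len(hits) + 1)
average_accuracy = hits.mean()
print(running_accuracy)   # rises from 0.0 to 0.75 over the four cycles
print(average_accuracy)   # 0.75
```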

Analysis

For a comprehensive understanding of the Dooder's performance, Figure-6 provides insight into the actual locations of available energy, the Dooder's movement decisions, and the corresponding accuracy in relation to the neighboring spaces at each cycle. An interesting observation is that energy availability in space #2 was consistently low throughout the simulation, resulting in the Dooder never selecting that particular space as a movement destination.

This observation highlights the Dooder's ability to adapt and learn from its experiences, as it effectively recognized the lower likelihood of energy presence in space #2 and consistently avoided moving to that space. The Dooder's decision-making process demonstrates its capacity to make informed choices based on historical patterns and optimize its movement strategy.

By the end of the simulation, the Dooder had progressed from complete ignorance of the environment to successfully identifying energy in neighboring spaces. Figure-7 shows how the same single neuron changed compared to its initial state in Figure-2, and Figure-8 traces how that neuron evolved throughout the simulation.

Observations and Takeaways

This implementation is a basic illustration of the capabilities of neural networks. Real-world applications often involve considerably more complexity, incorporating higher-dimensional input data and intricate patterns that are much less apparent.

Energy density is significant in determining the learning capacity of a Dooder. In a future article, I will delve further into the correlation between "adversity" and the agent's ability to learn effectively.

Success is not guaranteed: in many iterations of the experiment, the Dooder did not make it to 100 cycles. At some point, I will look at the impact of initial conditions on the experiment and check whether some Dooders are "doomed to fail".

Footnotes

[1] The movement model receives input data in the form of a numpy array. In the example depicted in Figure-1, the perception array is [0, 0, 0, 1, 0, 0, 0, 0, 0], where the presence of energy is indicated by the value '1' in the 4th space. As the project advances and additional complexity is introduced, the input data provided to the model will evolve.

[2] The neural network I used is a fully connected multilayer perceptron written in Python with the numpy library. It is a straightforward and traditional neural network design. This architecture is adapted from the incredible book "Neural Networks from Scratch" authored by Harrison Kinsley, known as Sentdex.

[3] The network architecture comprises two hidden layers and produces an output of nine values, indicating the designated location for the Dooder's movement. The position to which the agent will move is determined by selecting the argmax of that output.
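The shape of such a network can be sketched in a few lines of numpy. In the sketch below, the nine inputs, two hidden layers, nine outputs, and argmax selection come from the description above, while the hidden-layer size, the ReLU and softmax activations, and the names `DenseLayer` and `choose_space` are assumptions made for illustration. The sizes were not chosen to reproduce the neuron count mentioned earlier.

```python
import numpy as np

class DenseLayer:
    """A fully connected layer with randomly initialized weights."""
    def __init__(self, n_inputs, n_neurons, rng):
        self.weights = 0.01 * rng.standard_normal((n_inputs, n_neurons))
        self.biases = np.zeros(n_neurons)

    def forward(self, inputs):
        return inputs @ self.weights + self.biases

def relu(x):
    return np.maximum(0.0, x)

def softmax(x):
    exp = np.exp(x - x.max())
    return exp / exp.sum()

rng = np.random.default_rng(42)
HIDDEN_SIZE = 32  # hypothetical; the real layer sizes are not stated here
layers = [
    DenseLayer(9, HIDDEN_SIZE, rng),            # perception -> hidden layer 1
    DenseLayer(HIDDEN_SIZE, HIDDEN_SIZE, rng),  # hidden layer 1 -> hidden layer 2
    DenseLayer(HIDDEN_SIZE, 9, rng),            # hidden layer 2 -> nine outputs
]

def choose_space(perception):
    """Forward pass through both hidden layers, then pick the argmax of the output."""
    hidden = relu(layers[0].forward(perception))
    hidden = relu(layers[1].forward(hidden))
    output = softmax(layers[2].forward(hidden))
    return int(np.argmax(output)), output

perception = np.array([0, 0, 0, 1, 0, 0, 0, 0, 0], dtype=float)
space_index, output = choose_space(perception)
```

With untrained random weights the chosen index is arbitrary, which matches the near-random movement seen in the first few cycles.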